Is time running out for Wikipedia?

Former Wikimedia trustees are sounding the alarm: Wikipedia must adapt to AI or risk irrelevance. Read more in this edition of WikiWise.

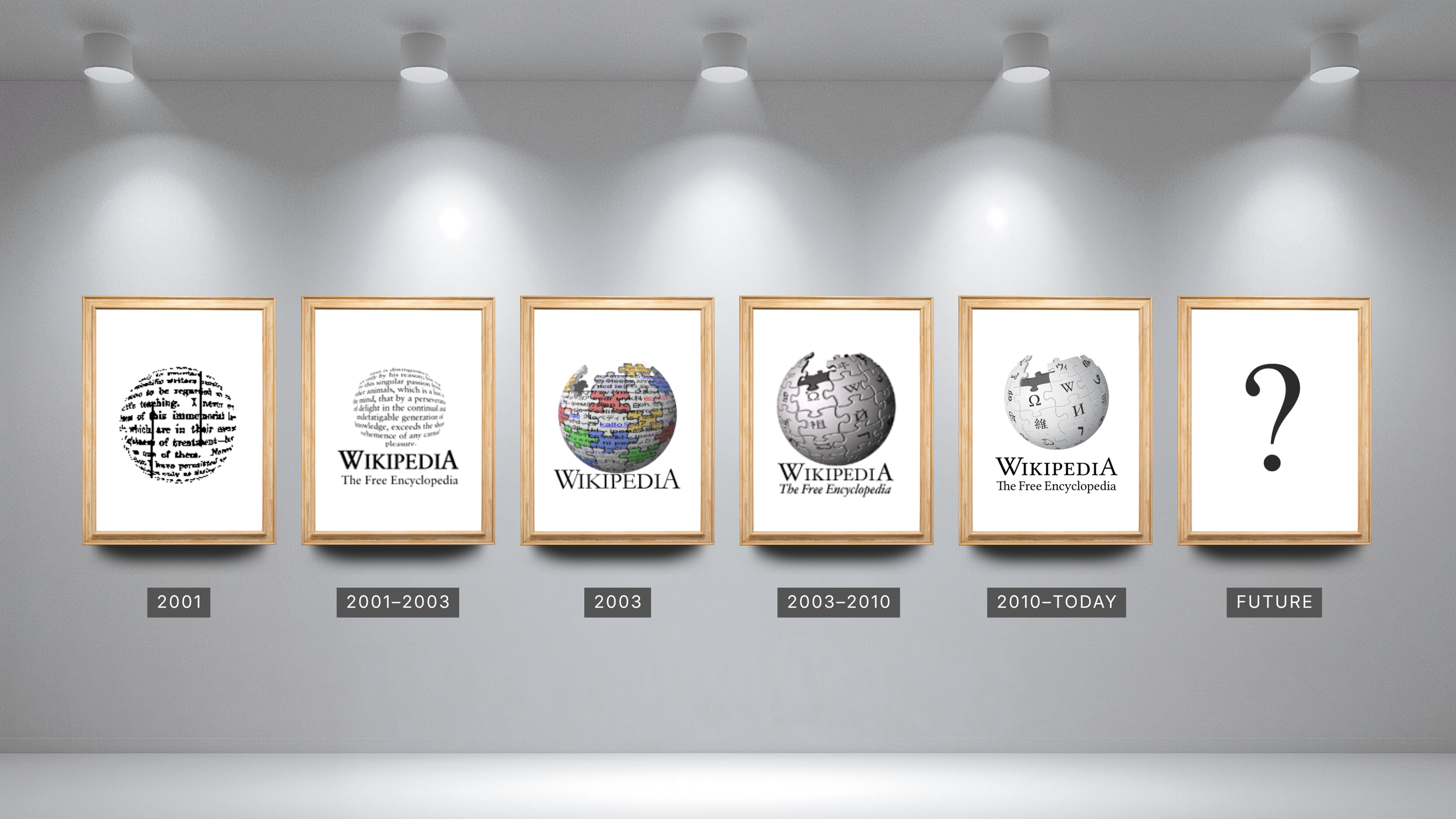

Wikipedia logos, Wikimedia Foundation, CC BY-SA 3.0, via Wikimedia Commons

🔔 Wiki Briefing

Former trustees warn Wikipedia: evolve now or get left behind

Wikipedia's 25th birthday last month sparked plenty of speculation about what's next for the online encyclopedia, but none so dire as the warnings from Christophe Henner and Dariusz Jemielniak, two former trustees of the Wikimedia Foundation.

Henner (and his co-author, the AI chatbot Claude) was the most scathing. In his assessment for the Wikipedia-published newspaper The Signpost, Henner accused Wikipedia of leaving the Global South behind while catering to an out-of-touch old guard. Henner claims the rise of AI search and changes in how young people consume content have led to a major decline in views. If Wikipedia keeps digging in its heels and refusing to engage with the AI world and the growing Internet audience, he warns, it will get left behind.

Wikipedia's value, Henner argues, comes not from the content itself but from the fact that it is verified content, something AI is incapable of creating. His solution? Wikipedia should become a "verification layer" for AI outputs.

Jemielniak, writing for the IEEE, was more measured, but still sees warning signs: a trend away from young people editing Wikipedia, and a hidebound community unwilling to adopt new tools and adapt to popular modes of information consumption.

📰 In the News

Epstein, the UK government, and the infinite spotlight of Wikipedia

Scandal has rocked the government of UK Prime Minister Keir Starmer to start 2026. Perhaps the most explosive revelations come from the archives of Jeffrey Epstein. The investigation into the convicted sex offender's extensive contact list and trove of documents surfaced new communications with the UK's ambassador to the U.S., Peter Mandelson, which led to the ambassador's sacking in September 2025.

Soon after, The Bureau of Investigative Journalism reported a flurry of editing activity on Mandelson's Wikipedia article that painted him in a more positive light and tried to downplay the ex-ambassador's Epstein connection. The Bureau reported the editor to Wikipedia volunteers, who ran their own investigation and in January banned two accounts for undisclosed conflict-of-interest (black-hat) paid editing. The content in question was added back to Mandelson's article.

Fallout from the Epstein files continued to rock the Starmer government, with communications director Tim Allan resigning days after further revelations about the close relationship between Mandelson and Epstein. Allan is also tied to a 2026 Wikipedia scandal: the firm he founded, Portland Communications, was implicated in a black-hat scheme stretching back more than a decade.

In the first of its 2026 Wikipedia investigations, The Bureau of Investigative Journalism reported that Portland Communications and a subcontractor had engaged in black-hat editing since at least the early 2010s. Topics included the Qatari government and business leaders in advance of the 2022 FIFA World Cup, the Gaddafi regime, and the association between Stella Artois and domestic violence.

As another UK PR exec later argued in PR Week, the trust placed in Wikipedia matters more than ever given its role in AI search. The Bureau's reporting shows that this trust rests partly on Wikipedia's permanent editing record: the truth will always come out eventually.

📚 Research Report

Pay no attention to the Grok behind the curtain

After its initial launch, Grokipedia carved out a space for humans to propose edits to the AI encyclopedia, but people aren't all that interested in contributing. By December, the Columbia Journalism Review reported that more than 75% of all suggested edits to Grokipedia were coming from the AI backbone rather than from human beings. Research released this month by the Tow Center found that human suggestions typically fare better: Grokipedia approves Grok's suggested edits at a slightly lower rate (about two-thirds) than those submitted by humans (70%). It's a twist on what the trustees wanted: where Wikipedia leans ever more into the human-verified, Grokipedia shifts further away.

The AI train doesn't end there; some of Grok's suggestions, once approved by Grok, make their way into ChatGPT or Google search results. So while Wikipedians are worried about whether they can stay relevant, Grokipedia is cutting out the middleman entirely.

You can dig into the data here.

🧩 Wikipedia Facts

Wikipedia has a theme song! A parody of the Eagles classic "Hotel California", "Hotel Wikipedia" is chock-full of obscure references to Wikipedia's inner workings. How many do you recognize?

💡 Tips & Tricks

Ever find yourself doom-scrolling and wish it were more… educational? Good news: Xikipedia creates a social media-like feed from Simple Wikipedia, giving users bite-sized snippets on the things they're most interested in, with links back to the main articles on Wikipedia.